Solving brain dynamics gives rise to flexible machine-learning models

Last year, MIT researchers announced that they had built “liquid” neural networks, inspired by the brains of small species: a class of flexible, robust machine-learning models that learn on the job and can adapt to changing conditions, for real-world safety-critical tasks like driving and flying. That flexibility promised better decision-making for many tasks involving time-series data, such as brain and heart monitoring, weather forecasting, and stock pricing.

But these models become computationally expensive as their number of neurons and synapses increases, and they require clunky computer programs to solve their underlying, complicated math. This math, like that of many physical phenomena, becomes harder to solve as the system grows, which means computing many small steps to arrive at a solution.

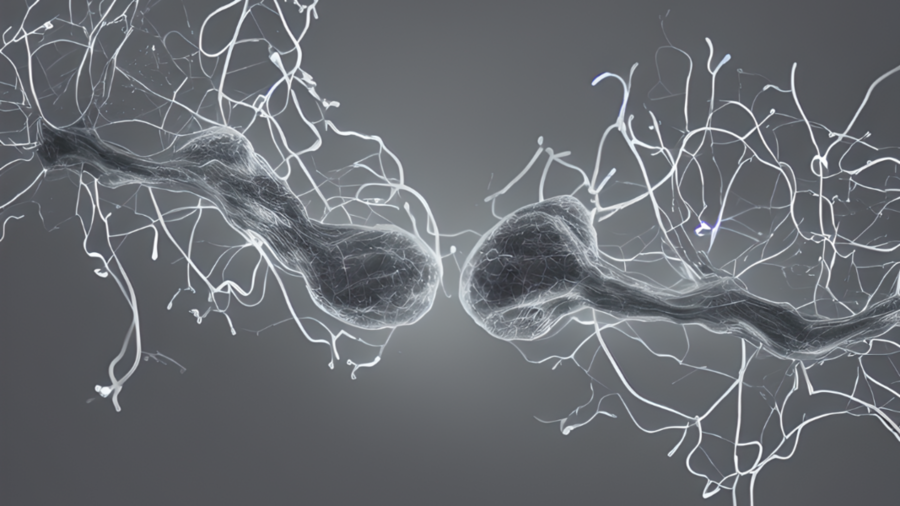

Now, the same team of scientists has discovered a way to alleviate this bottleneck by solving the differential equation behind the interaction of two neurons through synapses, unlocking a new type of fast and efficient artificial intelligence algorithm. These models have the same characteristics as liquid neural nets (flexible, causal, robust, and explainable) but are orders of magnitude faster and scalable. This type of neural net could therefore be used for any task that involves gaining insight into data over time, as the models are compact and remain adaptable even after training, whereas many traditional models are fixed.

The models, dubbed “closed-form continuous-time” (CfC) neural networks, outperformed state-of-the-art counterparts on a slew of tasks, with considerably higher speedups and performance in recognizing human activities from motion sensors, modeling physical dynamics of a simulated walker robot, and event-based sequential image processing. On a medical prediction task, for example, the new models were 220 times faster on a sampling of 8,000 patients.

A new paper on the work is published today in Nature Machine Intelligence.

“The new machine-learning models we call ‘CfC’s’ replace the differential equation defining the computation of the neuron with a closed form approximation, preserving the beautiful properties of liquid networks without the need for numerical integration,” says MIT Professor Daniela Rus, director of the Computer Science and Artificial Intelligence Laboratory (CSAIL) and senior author on the new paper. “CfC models are causal, compact, explainable, and efficient to train and predict. They open the way to trustworthy machine learning for safety-critical applications.”

Keeping things liquid

Differential equations enable us to compute the state of the world or a phenomenon as it evolves, but only step by step, not all the way through time at once. To model natural phenomena through time and understand previous and future behavior, as in human activity recognition or tracking a robot’s path, the team reached into a bag of mathematical tricks to find just the ticket: a “closed-form” solution that models the entire description of a whole system in a single compute step.

With their models, one can compute this equation at any time in the future, and at any time in the past. Not only that, but the speed of computation is much faster because you don’t need to solve the differential equation step-by-step.
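To make the contrast concrete, here is a minimal sketch in Python. It does not use the CfC equation from the paper. Instead it assumes a much simpler toy system, a single leaky unit obeying dx/dt = (target - x) / tau, whose exact solution is well known. The function names and parameter values are illustrative only.

```python
import math


def euler_solve(x0, target, tau, t_end, n_steps):
    """Integrate dx/dt = (target - x) / tau with explicit Euler steps."""
    dt = t_end / n_steps
    x = x0
    for _ in range(n_steps):
        # Each step only advances the state by a small time increment dt.
        x += dt * (target - x) / tau
    return x


def closed_form(x0, target, tau, t):
    """Evaluate the exact solution of the same toy equation at time t."""
    return target + (x0 - target) * math.exp(-t / tau)


# Illustrative values (assumptions, not taken from the paper).
x0, target, tau, t_end = 0.0, 1.0, 0.5, 2.0

exact = closed_form(x0, target, tau, t_end)             # one evaluation
coarse = euler_solve(x0, target, tau, t_end, 10)        # 10 small steps
fine = euler_solve(x0, target, tau, t_end, 10_000)      # 10,000 small steps

print(f"closed form:          {exact:.6f}")
print(f"Euler, 10 steps:      {coarse:.6f}")
print(f"Euler, 10,000 steps:  {fine:.6f}")
```

In this toy case the step-by-step solver needs thousands of iterations to approach the value that the closed-form expression returns in a single evaluation, and the same expression can also be evaluated at a negative `t` to look backward in time.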

Imagine an end-to-end neural network that receives driving input from a camera mounted on a car. The network is trained to generate outputs, like the car’s steering angle. In 2020, the team solved this by using liquid neural networks with 19 nodes, so 19 neurons plus a small perception module could drive a car. A differential equation describes each node of that system. If you replace that equation with the closed-form solution inside the network, it gives you nearly the same behavior, as it’s a good approximation of the actual dynamics of the system. The team can thus solve the problem with even fewer neurons, which means it would be faster and less computationally expensive.

These models can receive inputs as time series (events that happened in time), which could be used for classification, controlling a car, moving a humanoid robot, or forecasting financial and medical events. Across all of these modes, they can also increase accuracy, robustness, and performance, and, importantly, computation speed, which sometimes comes as a trade-off.

Solving this equation has far-reaching implications for advancing research in both natural and artificial intelligence systems. “When we have a closed-form description of neurons and synapses’ communication, we can build computational models of brains with billions of cells, a capability that is not possible today due to the high computational complexity of neuroscience models. The closed-form equation could facilitate such grand-level simulations and therefore opens new avenues of research for us to understand intelligence,” says MIT CSAIL Research Affiliate Ramin Hasani, first author on the new paper.

Source: MIT News